You can't manage what you can't see. And right now, across Canadian municipalities, almost no one is looking.

While city leaders debate where to deploy AI to improve citizen services, a silent crisis is unfolding behind the scenes: employees are quietly using unapproved AI tools to draft policies, analyze data, summarize confidential reports, and assist with decision-making—often without any visibility, oversight, or governance framework in place.

This phenomenon, known as Shadow AI, represents one of the most urgent governance challenges facing Canadian municipalities in 2026. Unlike shadow IT—which emerged gradually in the enterprise world—shadow AI has exploded with remarkable speed, driven by the simplicity of consumer AI tools and the pressure to "work smarter."

The stakes for municipal governments are extraordinarily high. Every unauthorized AI interaction with citizen data, confidential policy documents, or procurement records represents a potential compliance violation, security breach, reputational risk, and erosion of public trust. Yet most municipalities remain blind to the extent of the problem.

The Shadow AI Governance Gap: By the Numbers

The evidence is stark. According to recent research:

- 73% of municipal leaders either have no AI policy or are unsure whether one exists [1]

- 80% of municipal boards provide no AI-specific training for members [1]

- 77% of municipal boards have not addressed AI's ethical concerns [1]

- 32% of local governments report having no formal policies or guidelines for AI use [2]

- Only one-third consider themselves "very prepared" from a privacy and cybersecurity standpoint [2]

- Over 50% of organizations have at least one shadow AI application operating without governance [3]

But perhaps the most revealing statistic comes from enterprise security research: over 60% of enterprise SaaS and AI applications lack any governance whatsoever [4].

In municipal government, where transparency, accountability, and public trust are foundational, this governance vacuum is catastrophic.

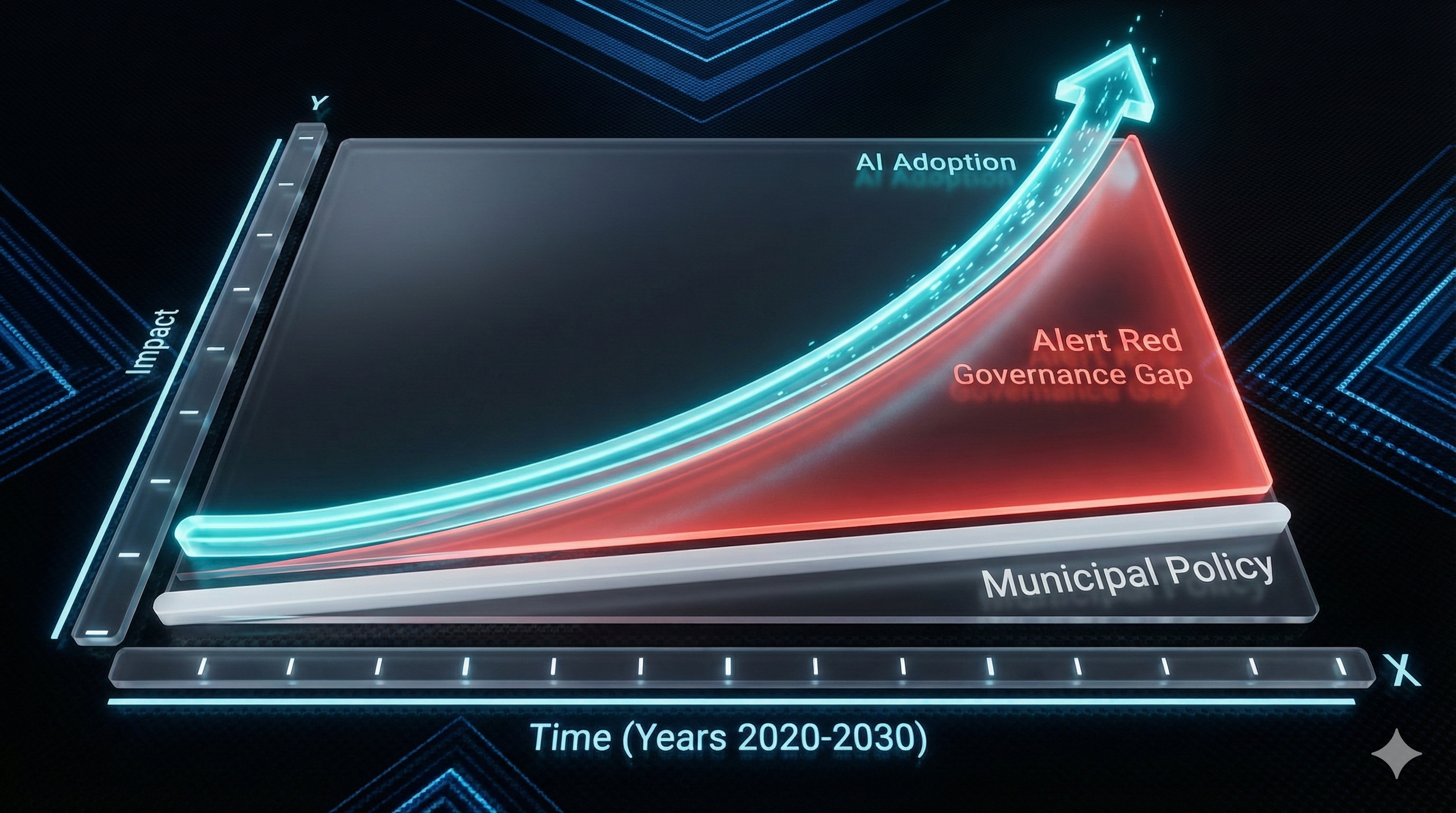

The problem isn't that municipalities lack intelligent leaders. It's that AI adoption has moved faster than governance frameworks. Employees discovered that tools like ChatGPT, Claude, and dozens of other generative AI platforms can dramatically accelerate their work. They began using them quietly to draft memos, analyze datasets, respond to public inquiries, and prepare council communications. Often no one asked permission or checked whether it was allowed, and because no formal policy existed, there was usually no rule to break.

This is Shadow AI. And it's transforming the risk landscape for every municipality in Canada.

Why Shadow AI Matters More in Government Than in Business

In the private sector, shadow AI creates data security, compliance, and IP risks. For government institutions, the implications are far more serious.

Compliance and Legal Exposure

Canadian municipalities operate under provincial access-to-information and protection of privacy legislation (for example, Ontario's Municipal Freedom of Information and Protection of Privacy Act, MFIPPA) and, increasingly, emerging AI-specific regulations. Ontario's Bill 194 explicitly seeks to regulate AI use by public sector entities, including municipalities, setting out requirements for transparency, accountability, risk management, and oversight [5].

When employees use unapproved AI tools to process citizen data or draft official communications:

- Freedom of information obligations become hard to meet: who can retrieve AI-generated content, or the prompts that produced it, when a request arrives?

- Audit trails disappear: shadow tools leave no records in municipal systems.

- Legal defensibility erodes: how can a municipality prove it acted responsibly?

- Breach notifications become complicated: was sensitive data exposed to external AI providers?

Accountability and Democratic Legitimacy

Municipalities exist to serve the public trust, and citizens expect transparency in how decisions are made. When AI systems influence municipal decisions, whether in permitting, resource allocation, or policy recommendations, citizens have a right to know what role AI played.

Shadow AI systems, by definition, operate without transparency. Residents cannot know whether their permit was delayed by a biased AI output, or whether their property tax assessment was influenced by an algorithm trained on outdated data. This loss of transparency corrodes the legitimacy of municipal government itself.

Risk Concentration in Critical Operations

A forecast of government AI trends for 2026 [6] identifies agentic AI, meaning systems that make autonomous decisions and take actions with minimal human intervention, as a defining trend. These systems are already being piloted in permitting, social services, and public safety applications.

If municipalities deploy autonomous AI systems without proper governance frameworks in place, the risks compound. A biased algorithm making hiring decisions, an AI system incorrectly flagging residents for benefit denial, or an autonomous permitting system rejecting applications based on faulty logic: these scenarios are not far-fetched. They are the foreseeable next chapter of municipal AI deployment.

And without governance frameworks, municipalities are walking blind into this future.

Figure 1: The widening gap between rapid AI adoption and slow governance implementation.

The Four Dimensions of the Shadow AI Governance Gap

Drawing on the U.S. Government Accountability Office's AI Accountability Framework, Canada's proposed Artificial Intelligence and Data Act (AIDA), which died on the Order Paper when Parliament was prorogued in January 2025, and leading municipal frameworks, four critical dimensions emerge where Canadian municipalities are falling short:

1. Visibility and Discovery

Most municipalities don't know which AI tools their staff are using. Microsoft Purview, manual discovery exercises, and internal audits can reveal much of an organization's shadow IT. Shadow AI, especially free consumer tools, is far harder to see. Employees use these tools on personal devices and personal accounts, or through approved platforms (like Excel or Teams) where AI features are built in and easy to overlook.

Result: You can't govern what you don't know exists. Most municipalities have little or no visibility into their AI landscape.
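For tools reached over the municipal network, one practical discovery step is to scan web proxy or DNS logs for known generative AI domains. This is a minimal sketch under stated assumptions: it expects a hypothetical CSV export with `timestamp,user,domain` rows, and its domain list is illustrative and far from complete. It cannot see activity on personal devices or personal networks, which remains the blind spot described above.

```python
from collections import Counter

# Illustrative, non-exhaustive list of consumer generative-AI domains.
KNOWN_AI_DOMAINS = {
    "chatgpt.com",
    "chat.openai.com",
    "claude.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def find_shadow_ai(log_lines, approved_domains):
    """Count requests per (user, AI domain) for AI domains not on the approved list.

    Each log line is assumed to look like 'timestamp,user,domain'
    (a hypothetical export format; real proxy logs will differ).
    """
    hits = Counter()
    for line in log_lines:
        parts = line.strip().split(",")
        if len(parts) != 3:
            continue  # skip malformed lines
        _, user, domain = parts
        domain = domain.lower()
        for known in KNOWN_AI_DOMAINS:
            # Match the domain itself or any of its subdomains.
            matches = domain == known or domain.endswith("." + known)
            if matches and known not in approved_domains:
                hits[(user, known)] += 1
    return hits


logs = [
    "2026-01-05T09:12,jsmith,chatgpt.com",
    "2026-01-05T09:15,jsmith,chatgpt.com",
    "2026-01-05T10:02,alee,claude.ai",
    "2026-01-05T10:30,alee,intranet.city.example",
]
print(find_shadow_ai(logs, approved_domains={"claude.ai"}))
# Counter({('jsmith', 'chatgpt.com'): 2})
```

Matching on subdomains catches hosts such as `api.chatgpt.com`. In practice the domain list needs regular upkeep, and the approved set would come from the municipality's own tool inventory.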

2. Policy and Governance Structure

A governance framework requires:

- Clear approval processes for AI tool adoption

- Defined roles and accountability — who's responsible for AI governance?

- Risk-based classification — which AI uses are high-risk, medium-risk, low-risk?

- Transparency requirements — when is AI use disclosed to the public?

- Human oversight mechanisms — what decisions require human review?

Most municipalities have none of these. The absence isn't malicious—it's simply that governance moved slower than adoption.
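A risk-based classification can start as a handful of explicit questions asked about every use case. The rules in this sketch are hypothetical and not drawn from any published framework. Anything that affects resident outcomes without human review counts as high risk. Anything that touches resident outcomes, personal information, or the public counts as medium risk. Everything else counts as low risk.

```python
from dataclasses import dataclass


@dataclass
class AIUseCase:
    name: str
    uses_personal_info: bool         # handles information about identifiable people
    affects_resident_outcomes: bool  # e.g. permits, benefits, enforcement
    human_reviews_output: bool       # a person checks every output before it is acted on
    public_facing: bool              # output goes directly to residents


def classify_risk(use: AIUseCase) -> str:
    """Assign a risk tier using illustrative rules, not a published standard."""
    if use.affects_resident_outcomes and not use.human_reviews_output:
        return "high"
    if use.affects_resident_outcomes or use.uses_personal_info or use.public_facing:
        return "medium"
    return "low"


memo = AIUseCase("Draft internal memo", uses_personal_info=False,
                 affects_resident_outcomes=False, human_reviews_output=True,
                 public_facing=False)
triage = AIUseCase("Auto-triage permit applications", uses_personal_info=True,
                   affects_resident_outcomes=True, human_reviews_output=False,
                   public_facing=False)
print(classify_risk(memo), classify_risk(triage))  # low high
```

Keeping the rules explicit and small makes them easy for a governance committee to debate and amend. The tier then drives which approvals and oversight each use requires.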

3. Data Protection and Compliance

Shadow AI tools often lack:

- Data residency controls: where is municipal data stored and processed?

- Contractual protections: do vendor terms allow data use for model training?

- Access controls: who can see sensitive information?

- Encryption and security standards: is data encrypted in transit and at rest, and are audit trails maintained?

- Privacy impact assessments: has the tool been evaluated under provincial privacy laws and emerging AI regulations?

Employees using unapproved tools may unknowingly expose personal information about residents, confidential financial data, or strategic municipal decisions to external AI providers.
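The checklist above translates naturally into an intake check that lists which requirements a tool fails. This is a minimal sketch, and the field names are hypothetical. Requiring Canadian residency (`required_region="CA"`) is an assumed local policy choice rather than a universal legal rule, which is why it is a parameter. The example profile describes a made-up tool, not any real product.

```python
from dataclasses import dataclass


@dataclass
class ToolProfile:
    name: str
    data_region: str               # where data is stored and processed, e.g. "CA"
    vendor_trains_on_inputs: bool  # do the terms allow inputs to train models?
    has_access_controls: bool
    encrypts_data: bool            # in transit and at rest
    keeps_audit_logs: bool
    pia_completed: bool            # privacy impact assessment on file


def unmet_requirements(tool: ToolProfile, required_region: str = "CA") -> list:
    """List the data-protection checks a tool fails (empty list = all passed)."""
    gaps = []
    if tool.data_region != required_region:
        gaps.append("data residency")
    if tool.vendor_trains_on_inputs:
        gaps.append("contractual protection")
    if not tool.has_access_controls:
        gaps.append("access controls")
    if not tool.encrypts_data:
        gaps.append("encryption")
    if not tool.keeps_audit_logs:
        gaps.append("audit trail")
    if not tool.pia_completed:
        gaps.append("privacy impact assessment")
    return gaps


unvetted = ToolProfile(
    name="unvetted tool (hypothetical)",
    data_region="unknown",
    vendor_trains_on_inputs=True,
    has_access_controls=False,
    encrypts_data=True,
    keeps_audit_logs=False,
    pia_completed=False,
)
print(unmet_requirements(unvetted))
# ['data residency', 'contractual protection', 'access controls', 'audit trail', 'privacy impact assessment']
```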

4. Audit and Accountability

Without governance frameworks, municipalities lose:

- Audit trails — who used which tool, when, with what data?

- Decision documentation — why was this AI recommendation accepted or rejected?

- Bias testing records — have AI systems been evaluated for fairness?

- Performance monitoring — is the AI system still producing reliable results?

- Breach response capabilities — if data was exposed, what happened, and how was it contained?

The result is a governance vacuum where accountability disappears.
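One way to make audit trails trustworthy is a hash-chained log. Each entry stores the SHA-256 hash of the previous entry, so any later edit becomes detectable. This is a general tamper-evidence technique, not something the frameworks cited here prescribe. The fields (user, tool, data class, purpose) mirror the questions above, and their names are illustrative.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    # Hash a canonical JSON encoding so key order does not change the digest.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Append-only, hash-chained record of AI tool usage (illustrative sketch)."""

    def __init__(self):
        self.entries = []

    def record(self, timestamp, user, tool, data_class, purpose):
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "timestamp": timestamp,
            "user": user,
            "tool": tool,
            "data_class": data_class,
            "purpose": purpose,
            "prev_hash": prev,
        }
        entry = dict(body, hash=_entry_hash(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited, reordered or removed entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _entry_hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("2026-01-05T09:12", "jsmith", "approved-assistant", "internal", "draft memo")
log.record("2026-01-05T10:02", "alee", "approved-assistant", "confidential", "summarize report")
print(log.verify())  # True
log.entries[0]["purpose"] = "something else"  # simulate tampering
print(log.verify())  # False
```

Hash chaining reveals entries that were altered, reordered, or removed from the middle. On its own it cannot detect deletion of the newest entries, so a real deployment would also keep a copy of the latest hash somewhere separate.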

The 2026 Inflection Point: Agentic AI Raises the Stakes

The Shadow AI governance challenge is about to become far more urgent. In 2026, the AI industry is shifting from conversational tools (chatbots you type into) to agentic AI systems (autonomous agents that make decisions and take actions).

Industry analysts predict that agentic AI will proliferate in government in 2026 [6]. These systems are expected to handle:

- Citizen services — virtual assistants managing service requests, benefits, permits

- Internal operations — scheduling, resource allocation, decision-routing

- Compliance and auditing — flagging regulatory violations, generating reports

- Public safety and planning — predictive analysis, resource deployment

The governance implications are profound. When AI systems operate autonomously:

- The "black box" problem becomes critical — who can explain why a system denied a permit or flagged a resident?

- Accountability becomes murky — if an AI makes a decision that harms a citizen, who is responsible?

- Auditability becomes non-negotiable — every decision must be traceable, explainable, and reviewable by humans

- Risk concentration increases — a single faulty model can impact thousands of citizen interactions simultaneously

A municipality that hasn't solved its governance gaps today will be dangerously exposed when it deploys autonomous AI systems tomorrow.
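For agentic systems, the simplest human-oversight control is a gate between what an agent proposes and what actually happens. This sketch is an assumption, not a reference design. Only action types on a short allowlist run automatically. Everything else, including action types nobody anticipated, waits for a person. Every routing decision is logged with the agent's stated rationale. The action names and resident IDs are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical low-impact actions an agent may take without sign-off.
AUTO_APPROVED_ACTIONS = {"send_reminder", "update_ticket_status"}


@dataclass
class ProposedAction:
    action_type: str
    resident_id: str
    rationale: str  # the agent's stated reason, kept for later review


def route_action(action, review_queue, executed, decision_log):
    """Let allowlisted actions through; hold everything else for a person.

    `executed` is a stand-in for whatever actually carries out an action.
    """
    if action.action_type in AUTO_APPROVED_ACTIONS:
        executed.append(action)
        outcome = "executed"
    else:
        review_queue.append(action)
        outcome = "held_for_review"
    # Log every routing decision, whichever path it took.
    decision_log.append(
        (action.action_type, action.resident_id, outcome, action.rationale)
    )
    return outcome


queue, done, log = [], [], []
print(route_action(ProposedAction("send_reminder", "R-104", "licence renewal due"), queue, done, log))  # executed
print(route_action(ProposedAction("deny_permit", "R-221", "setback below bylaw minimum"), queue, done, log))  # held_for_review
print(len(queue), len(done), len(log))  # 1 1 2
```

An allowlist fails safe: a new or unexpected action type is held for review by default rather than executed.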

What Responsible Municipal AI Governance Looks Like

Leading municipalities and frameworks—including the IVADO AI for Municipalities guide, the U.S. GAO AI Accountability Framework, Canada's proposed AIDA, and best practices from cities like Seattle—outline a coherent governance model [7]. It operates across four pillars:

Pillar 1: AI Literacy

All municipal employees, elected officials, and decision-makers need to understand how AI systems work, what risks they introduce (bias, accuracy, security), and when AI use is appropriate. This isn't about making everyone a machine learning engineer. It's about creating a shared understanding so staff can make responsible decisions.

Action: Implement mandatory AI literacy training for all staff, with tailored content for leadership and data handlers.

Pillar 2: Data Management and Protection

A robust data governance framework covers data mapping, classification, access controls, and contractual protections.

Action: Conduct a data discovery exercise to map your most sensitive ("crown jewel") data. Implement access controls that restrict which AI tools can interact with sensitive data.
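Restricting which AI tools can touch which data usually starts with a classification scheme and a per-tool clearance. This sketch assumes four hypothetical sensitivity levels and made-up tool names. Unregistered tools are denied by default, which is how the control steers staff away from shadow tools.

```python
from enum import IntEnum


class DataClass(IntEnum):
    """Hypothetical sensitivity levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3  # e.g. personal information about residents


# Highest sensitivity level each registered tool may receive (illustrative).
TOOL_CLEARANCE = {
    "public-web-chatbot": DataClass.PUBLIC,
    "approved-internal-assistant": DataClass.CONFIDENTIAL,
}


def may_send(tool: str, data_class: DataClass) -> bool:
    """Allow only registered tools, and only up to their clearance level."""
    clearance = TOOL_CLEARANCE.get(tool)
    return clearance is not None and data_class <= clearance


print(may_send("approved-internal-assistant", DataClass.CONFIDENTIAL))  # True
print(may_send("approved-internal-assistant", DataClass.RESTRICTED))    # False
print(may_send("some-unregistered-tool", DataClass.PUBLIC))             # False
```

Using an ordered `IntEnum` means a single comparison expresses "this tool may receive this level of data or anything less sensitive."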

Pillar 3: Governance Structure and Oversight

Establish an AI governance committee, a designated AI officer, approval processes, and a registry of approved tools.

Action: Create an AI governance committee, establish approval workflows, and maintain an inventory of all AI tools in use.
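An inventory becomes more useful when each entry has a named owner and moves through explicit approval states. The workflow here (requested, under review, approved or rejected, retired) is a hypothetical example, and the tool and department names are made up. The design ensures that no tool can skip review and that every status change records who made it.

```python
from dataclasses import dataclass, field

# Allowed status changes in a hypothetical approval workflow.
TRANSITIONS = {
    "requested": {"under_review"},
    "under_review": {"approved", "rejected"},
    "approved": {"retired"},
    "rejected": set(),
    "retired": set(),
}


@dataclass
class RegistryEntry:
    tool: str
    owner: str  # accountable person or department
    status: str = "requested"
    history: list = field(default_factory=list)  # (old, new, changed_by) tuples


class AIToolRegistry:
    def __init__(self):
        self._entries = {}

    def request(self, tool: str, owner: str) -> None:
        if tool in self._entries:
            raise ValueError(f"{tool} is already in the registry")
        self._entries[tool] = RegistryEntry(tool, owner)

    def move(self, tool: str, new_status: str, changed_by: str) -> None:
        entry = self._entries[tool]
        if new_status not in TRANSITIONS[entry.status]:
            raise ValueError(f"cannot move {tool} from {entry.status} to {new_status}")
        entry.history.append((entry.status, new_status, changed_by))
        entry.status = new_status

    def approved_tools(self) -> list:
        return sorted(e.tool for e in self._entries.values() if e.status == "approved")


registry = AIToolRegistry()
registry.request("meeting-summarizer", owner="Clerk's Office")
registry.request("code-assistant", owner="IT")
registry.move("meeting-summarizer", "under_review", changed_by="AI committee")
registry.move("meeting-summarizer", "approved", changed_by="AI committee")
print(registry.approved_tools())  # ['meeting-summarizer']
```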

Pillar 4: Transparency and Public Accountability

Municipalities must be transparent about where AI is being used, how decisions can be appealed, and what safeguards exist against bias.

Action: Develop a public-facing AI transparency statement. Require that AI use in high-impact areas be disclosed to residents.
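A public transparency statement can be generated from the same inventory so that it stays current. This minimal sketch assumes each use is recorded with hypothetical `service`, `purpose`, `impact`, and `appeal_contact` fields. It lists only high-impact uses, each with a contact for requesting human review.

```python
def transparency_statement(uses):
    """Render a plain-text public notice listing high-impact AI uses.

    Each item in `uses` is a dict with hypothetical keys:
    "service", "purpose", "impact" ("high", "medium" or "low") and "appeal_contact".
    """
    lines = ["AI systems used in decisions that affect residents:"]
    for use in sorted(uses, key=lambda u: u["service"]):
        if use["impact"] == "high":
            lines.append(
                f"- {use['service']}: {use['purpose']} "
                f"(to request human review, contact {use['appeal_contact']})"
            )
    if len(lines) == 1:
        lines.append("- None at this time.")
    return "\n".join(lines)


uses = [
    {"service": "Building permits", "purpose": "flags incomplete applications",
     "impact": "high", "appeal_contact": "permits@city.example"},
    {"service": "Internal memos", "purpose": "drafting assistance",
     "impact": "low", "appeal_contact": "it@city.example"},
]
print(transparency_statement(uses))
```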

The Roadmap: How Municipalities Can Close the Gap in 2026

Implementing responsible AI governance can start now. Drawing on the NIST AI Risk Management Framework and best practices from leading municipalities, a phased approach might look like this:

Phase 1: Assessment and Discovery (Weeks 1-4)

Conduct risk assessments, identify shadow tools, map your most sensitive ("crown jewel") data, and document regulatory requirements.

Phase 2: Policy Development (Weeks 5-8)

Establish an AI governance committee, draft use policies, define accountability, and create a risk-based classification framework.

Phase 3: Implementation (Weeks 9-16)

Establish approved tool lists, implement access controls, deploy monitoring mechanisms, and launch AI literacy training.

Phase 4: Monitoring and Improvement (Ongoing)

Monitor for new shadow AI, evaluate system performance, update policies, and maintain audit trails.

This isn't a multi-year initiative. It's a disciplined, roughly four-month (16-week) program that positions municipalities to govern AI responsibly before agentic systems become widespread.
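Turning the week ranges into calendar dates is simple arithmetic. This sketch assumes the program starts on Monday, January 5, 2026, an arbitrary example date. It covers only the three fixed-length phases, since Phase 4 is ongoing. Sixteen weeks is 112 days, just under four calendar months.

```python
from datetime import date, timedelta

# (phase, first week, last week) from the roadmap; Phase 4 is ongoing and omitted.
PHASES = [
    ("Assessment and Discovery", 1, 4),
    ("Policy Development", 5, 8),
    ("Implementation", 9, 16),
]


def phase_calendar(start: date):
    """Return (phase, first_day, last_day) for each fixed-length phase."""
    calendar = []
    for name, first_week, last_week in PHASES:
        first_day = start + timedelta(weeks=first_week - 1)
        last_day = start + timedelta(weeks=last_week) - timedelta(days=1)
        calendar.append((name, first_day, last_day))
    return calendar


for name, first_day, last_day in phase_calendar(date(2026, 1, 5)):
    print(f"{name}: {first_day} to {last_day}")
# Assessment and Discovery: 2026-01-05 to 2026-02-01
# Policy Development: 2026-02-02 to 2026-03-01
# Implementation: 2026-03-02 to 2026-04-26
```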

The Case for Sovereign AI Governance Tools

Canada is increasingly moving toward sovereign AI—solutions ensuring data residency and regulatory alignment. Rather than relying on international providers with unclear compliance, municipalities can adopt platforms built for Canadian laws.

A sovereign approach ensures:

- Data Residency: Data stays within Canadian borders.

- Regulatory Alignment: Compliance with PIPEDA, provincial privacy laws, and emerging Canadian AI regulation.

- Governance Transparency: Decision-making remains under Canadian control.

TrueNorth Civic AI: A Safe First Step

TrueNorth Civic AI is not a governance enforcement platform. It is a secure, sovereign AI tool designed to be your municipality's approachable first step into the AI world.

We offer a context-aware, safe environment grounded in municipal legislation, allowing staff to innovate without the risks of shadow AI. By providing a compliant alternative to consumer tools, TrueNorth helps you close the governance gap naturally.

- Grounded in Canadian Municipal Legislation

- Full Data Residency & Privacy Protection

- Context-Aware & Role-Based Security

References

[1] Diligent, "AI in Public Sector Governance: A Board's Essential Guide," 2026.

[2] MNP, "How can local governments implement effective cybersecurity governance frameworks for AI," 2025.

[3] Obsidian Security, "Why Shadow AI and Unauthorized GenAI Tools Are a Threat," 2025.

[4] CloudGate.ai, "Survey of Enterprise SaaS and AI Application Governance," 2025.

[5] Ontario Government, Bill 194, "Strengthening Cyber Security and Building Trust in the Public Sector Act, 2024," 2024.

[6] Government of Canada / SAS, "Government AI Predictions 2026," 2025.

[7] IVADO, "Artificial Intelligence Serving Municipalities: Guide for Responsible Implementation," 2025; U.S. GAO, "Artificial Intelligence: An Accountability Framework for Federal Agencies and Other Entities" (GAO-21-519SP), 2021; City of Seattle, "2025-2026 AI Plan," 2025.